Continuous validation. Drift detection. Release evidence.

Records deploys adjacent to your FHIR server and answers the question your CDR can't: “Is this data still conformant?”

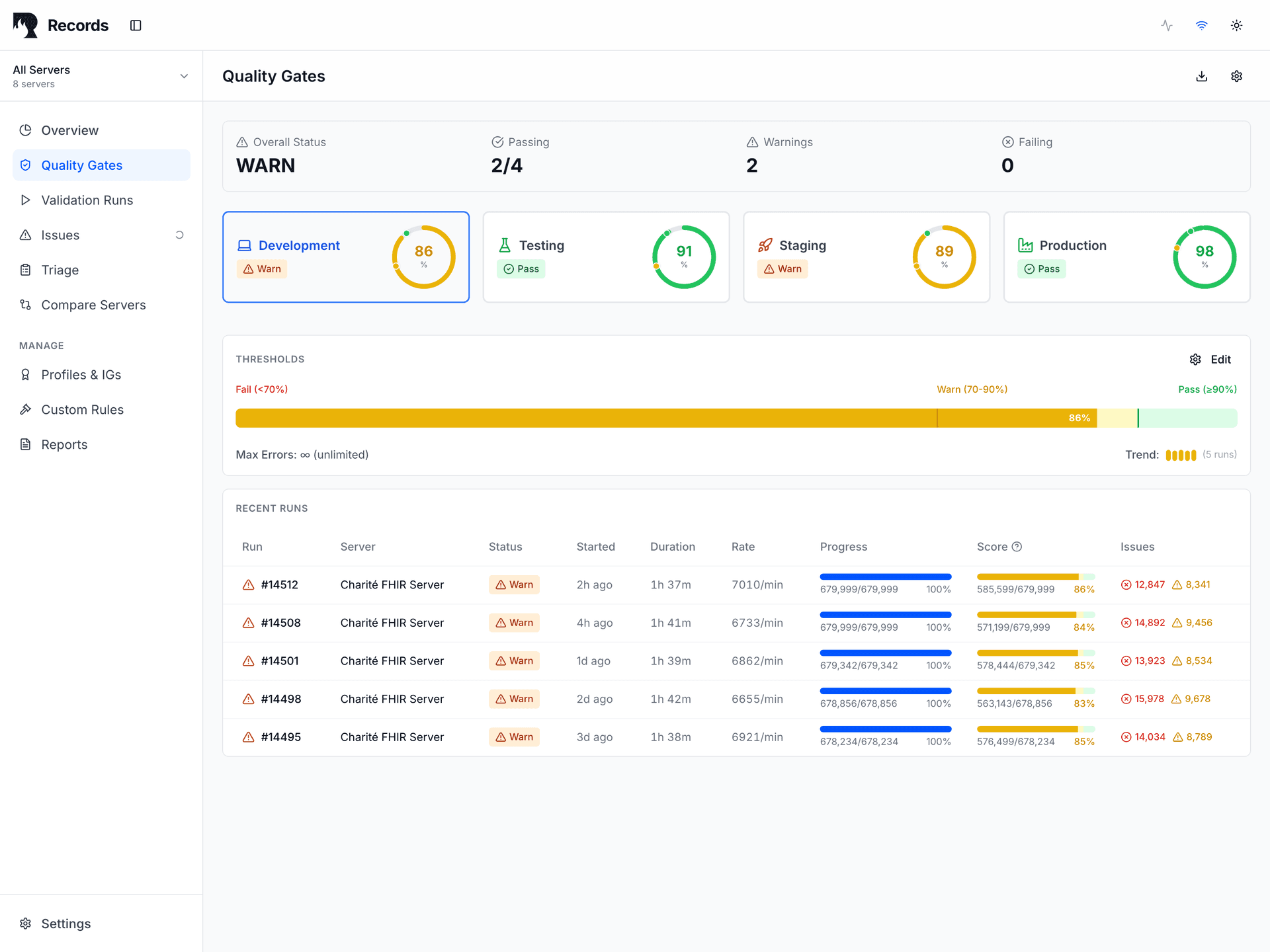

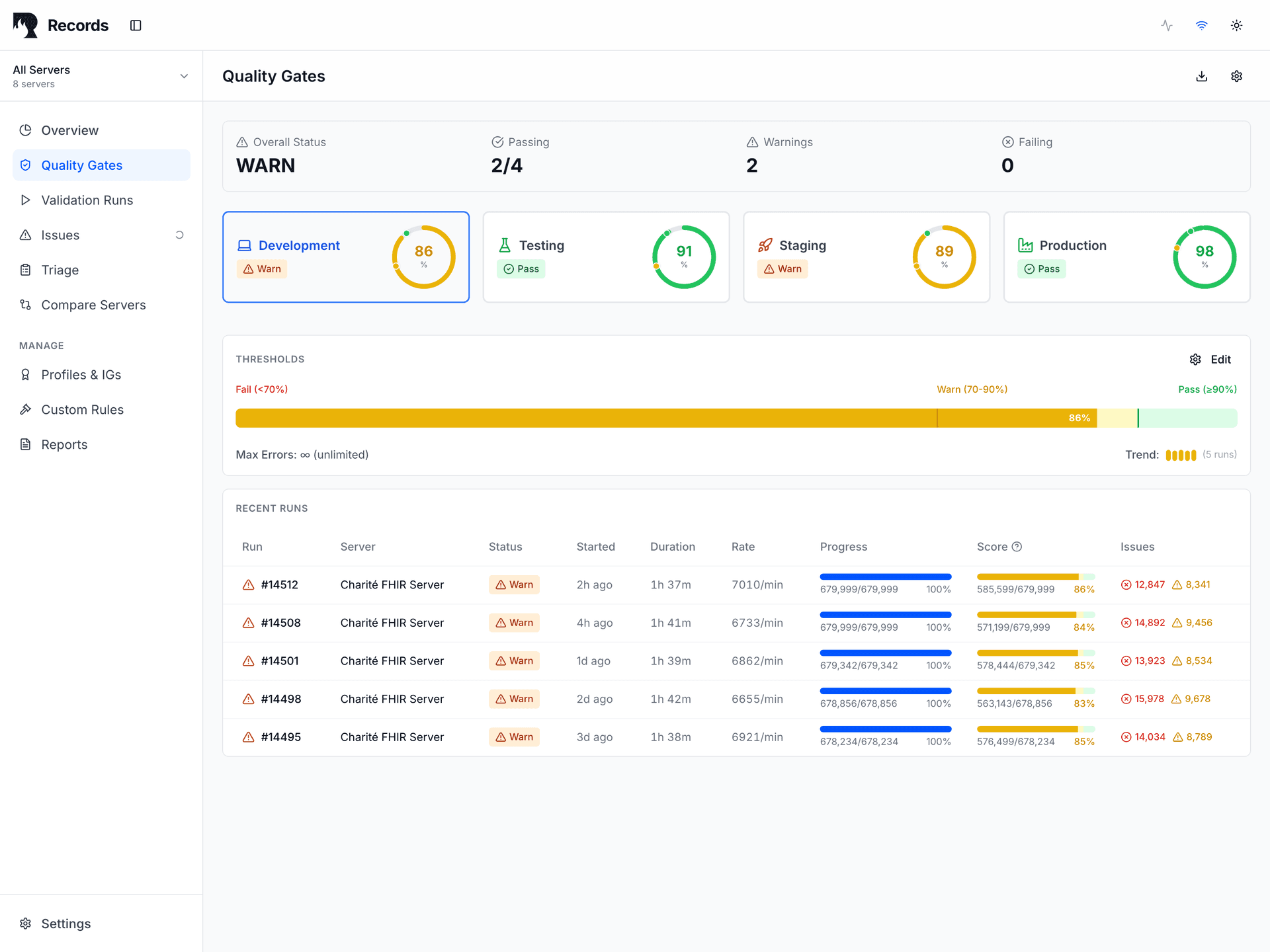


What Records does

Four things Records does for your FHIR infrastructure.

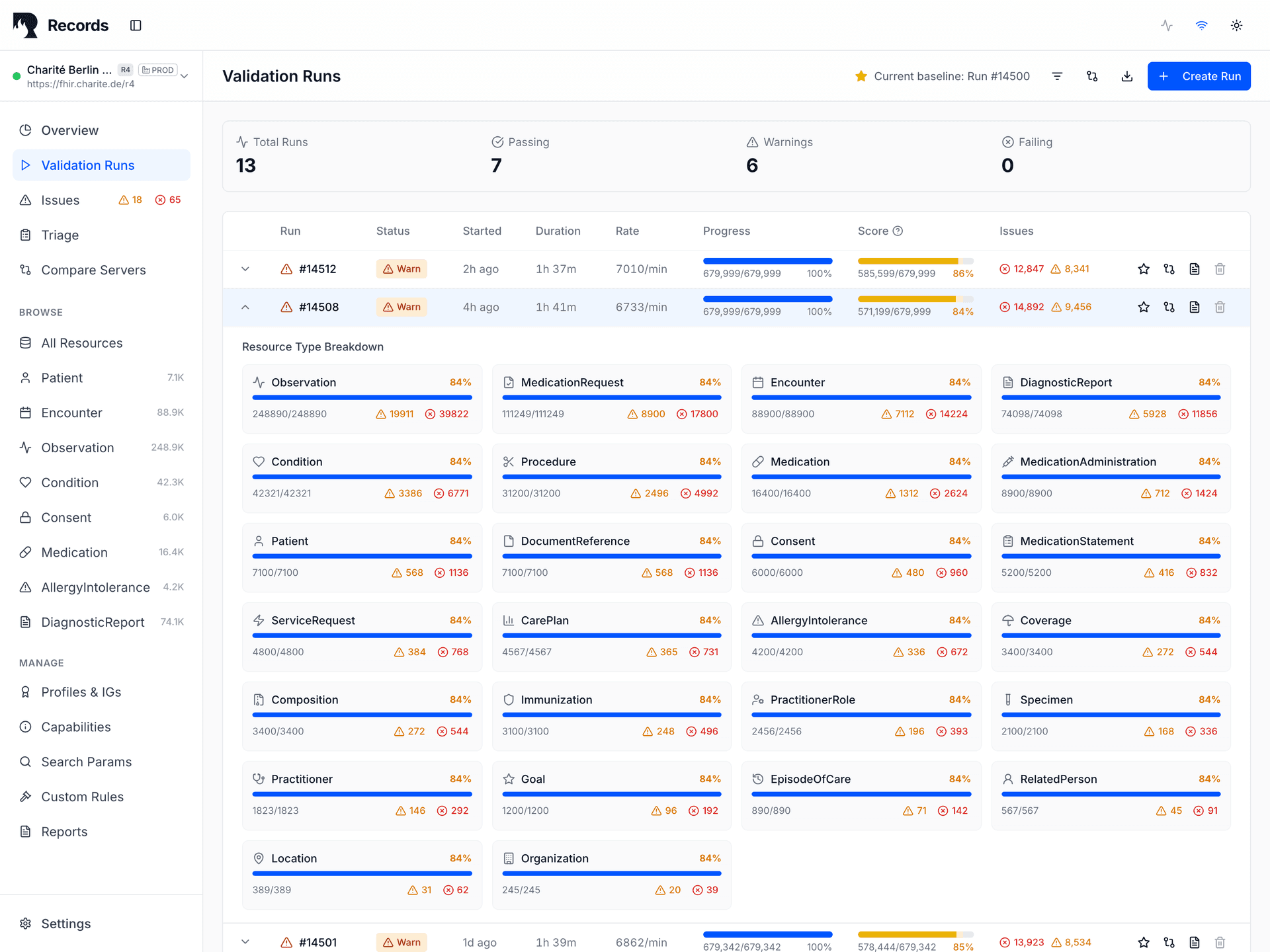

Validates continuously

Runs profiles, terminologies, and custom rules against live FHIR data. Every run produces a deterministic PASS / WARN / FAIL signal.

Detects drift

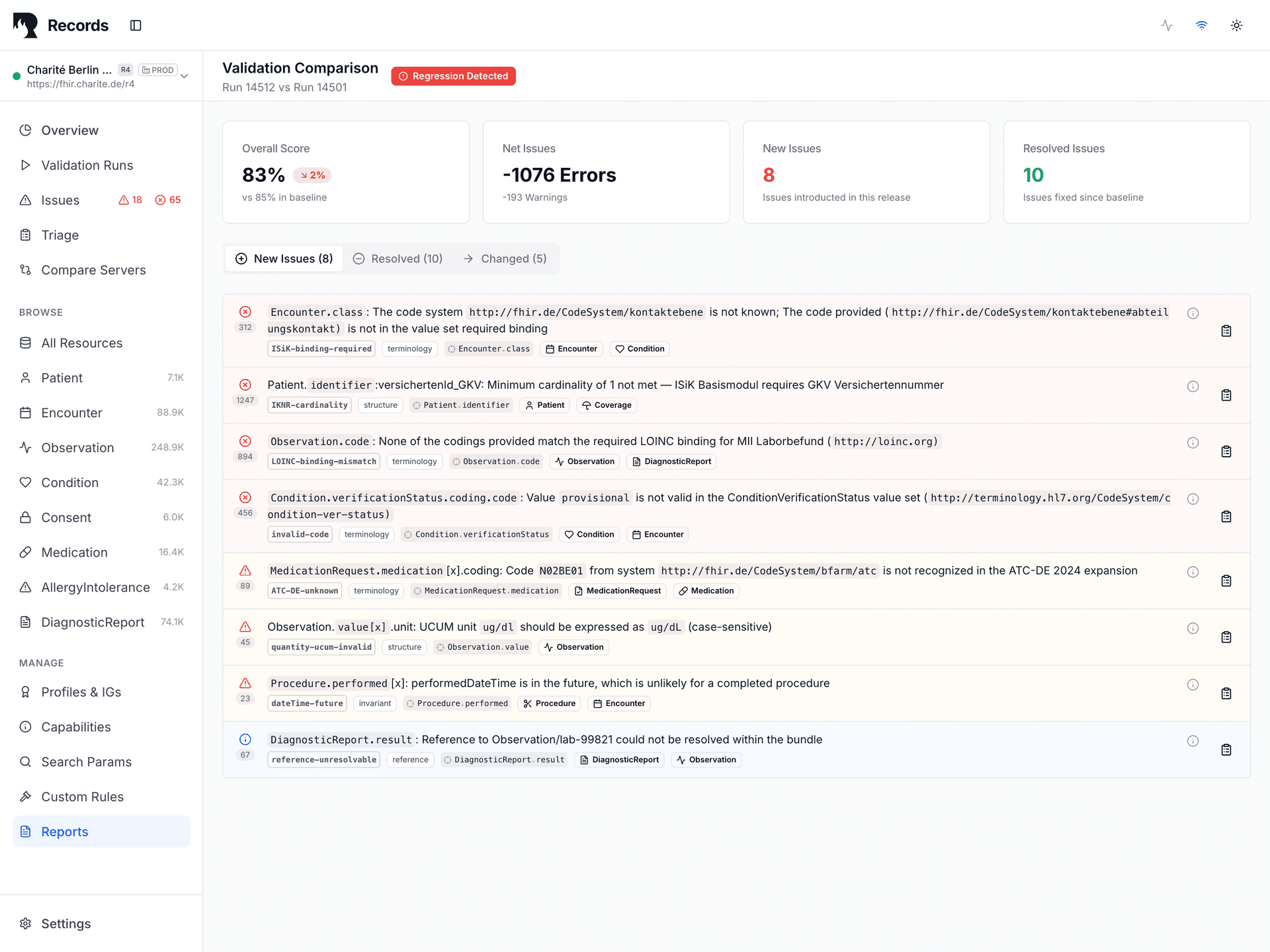

Compares each validation run against baselines. Surfaces regressions from server upgrades, profile revisions, terminology changes, and pipeline modifications.

Produces evidence

Generates reproducible, timestamped proof for audits, release gates, vendor acceptance, and regulatory handovers.

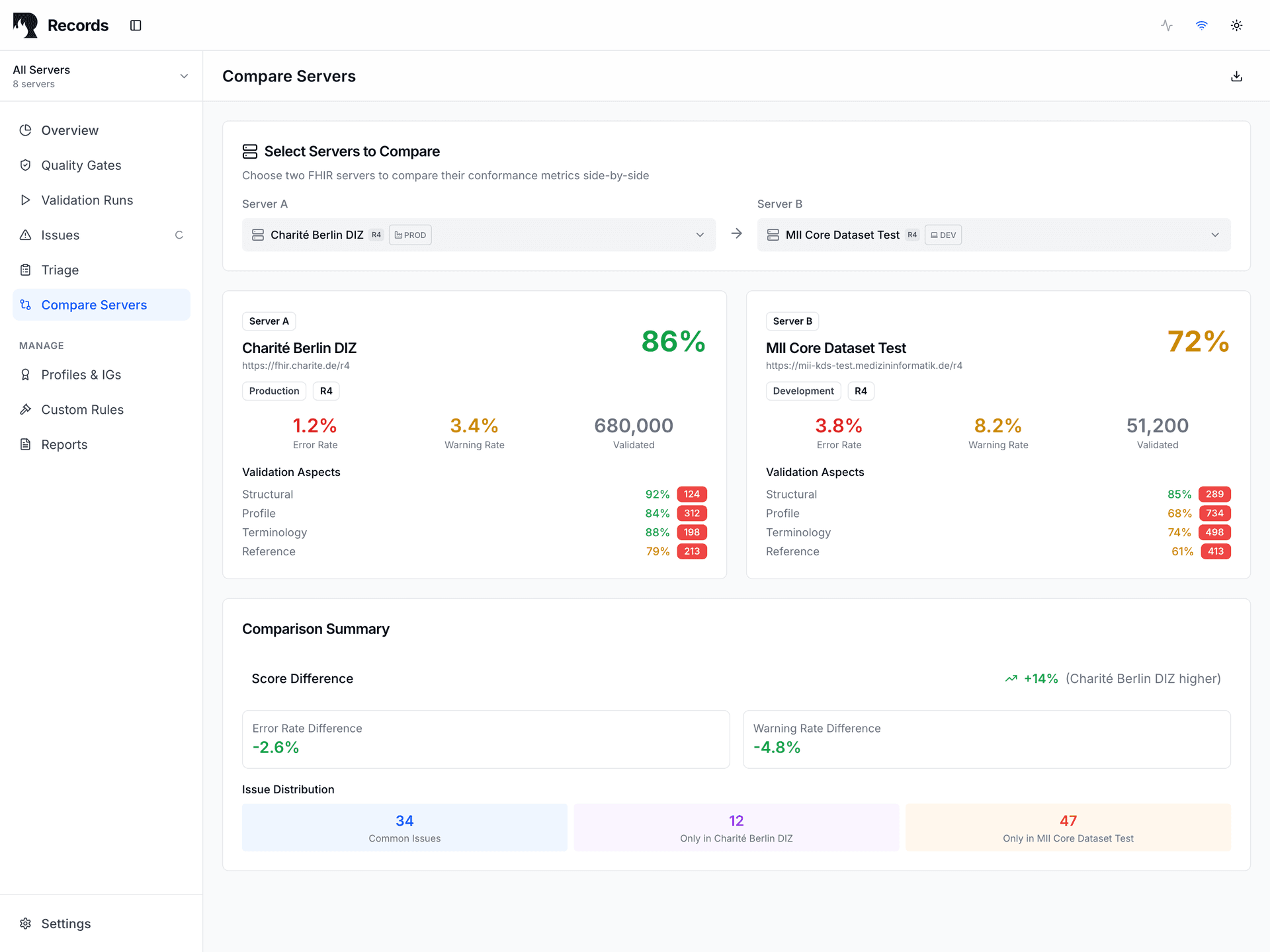

Manages environments

Tracks validation state across dev, staging, and production. Compare signals across environments before promoting changes.

Why evidence matters

The core diagnostic question is not “Is our data valid?” but “Will we know when it stops being valid?”

Conformance at deployment time proves nothing about conformance tomorrow. At least seven distinct drift vectors can silently degrade data quality after initial validation:

| Drift Vector | Example | Detection Window |
|---|---|---|
| Terminology server update | CodeSystem version change alters ValueSet memberships | Days to weeks |
| IG/Profile revision | New constraints added or cardinality changed | Release cycle |
| FHIR server upgrade | HAPI v6.3→v6.4 changes validation behavior | Immediate |
| Mapping pipeline change | ETL logic drift alters output structure | Hours to days |
| Environment config divergence | Dev uses R4@1.4.0, Prod uses R4@1.5.0 | Silent |
| Data volume shift | Edge cases emerge at scale that never appeared in testing | Weeks |
| Dependency chain update | Transitive profile dependency changes upstream | Silent |

Without continuous validation, these vectors compound silently. Records makes each one detectable.

How it fits your infrastructure

Records adds an observation layer. It doesn't build a silo.

Records IS

- A validation control plane

- A drift detection engine

- A release evidence surface

- An environment comparison tool

- A vendor-neutral observer

- Adjacent to your existing stack

Records is NOT

- A CDR or storage platform

- An EHR or clinical application

- A BI or analytics system

- A compliance certification tool

- A system of record

- A decision-making authority

Clear responsibility boundaries

Your FHIR server stores data. Records observes it.

| Responsibility | Your FHIR server | Records |
|---|---|---|
| Data storage | ✓ | |
| Data access control | ✓ | |
| Clinical workflows | ✓ | |
| Validation evidence | | ✓ |
| Drift detection | | ✓ |
| Release gates | | ✓ |
| Baseline management | | ✓ |

Deterministic Signals

Every validation run produces one of three signals. Records produces signals — you decide and act.

- PASS: All thresholds met. Operator: proceed with confidence.

- WARN: Non-critical thresholds breached. Operator: proceed with investigation.

- FAIL: Critical thresholds breached. Operator: investigate before proceeding.
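
The three signals can be read as a simple threshold evaluation. The following is a minimal sketch of that idea, not Records' actual rule engine: the `Threshold` type, its `critical` flag, and the metric names are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Signal(Enum):
    PASS = "PASS"
    WARN = "WARN"
    FAIL = "FAIL"


@dataclass(frozen=True)
class Threshold:
    """Hypothetical threshold: the metric must not exceed `limit`."""
    metric: str
    limit: float
    critical: bool


def evaluate(metrics: dict[str, float], thresholds: list[Threshold]) -> Signal:
    """Map measured metrics to exactly one signal; the worst breach wins."""
    signal = Signal.PASS
    for t in thresholds:
        # Assumption: a metric missing from the run counts as not breached.
        if metrics.get(t.metric, 0.0) <= t.limit:
            continue
        if t.critical:
            return Signal.FAIL  # any critical breach is a FAIL
        signal = Signal.WARN    # non-critical breach, keep checking for critical ones
    return signal


# Illustrative thresholds; names and limits are made up.
thresholds = [
    Threshold("error_rate", 0.01, critical=True),
    Threshold("warning_rate", 0.05, critical=False),
]
print(evaluate({"error_rate": 0.0, "warning_rate": 0.08}, thresholds).value)  # WARN
```

Because the function depends only on its arguments, the same metrics and thresholds always yield the same signal, which is the property the signals are meant to guarantee.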

Determinism & Reproducibility

Same inputs produce same outputs. Evidence is comparable across time, environments, and teams.

This is the reproducibility contract — seven inputs must be identical for a validation run to be considered strictly comparable:

| Input | Why it matters |
|---|---|
| FHIR endpoint URL | Identifies the data source |
| Profile set + version | Determines validation rules |
| Terminology server state | Resolves code bindings |
| Validator version | Engine behavior determinism |
| Run configuration | Thresholds, exclusions, scope |
| Environment label | Isolation and comparison context |
| Timestamp | Point-in-time data snapshot |
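
One way to picture the contract is as a fingerprint over the seven inputs: two runs are strictly comparable only if their fingerprints match. The sketch below assumes each input can be reduced to a string; the `RunInputs` type, its field names, and the example values are hypothetical and do not describe Records' internal format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, fields, replace


@dataclass(frozen=True)
class RunInputs:
    """The seven inputs from the table above, as plain strings (assumption)."""
    endpoint_url: str
    profile_set: str
    terminology_state: str
    validator_version: str
    run_config: str
    environment: str
    snapshot_time: str


def fingerprint(inputs: RunInputs) -> str:
    """SHA-256 over a canonical JSON encoding of all seven inputs."""
    canonical = json.dumps(asdict(inputs), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def strictly_comparable(a: RunInputs, b: RunInputs) -> bool:
    return fingerprint(a) == fingerprint(b)


def differing_inputs(a: RunInputs, b: RunInputs) -> list[str]:
    """Name the inputs that break comparability between two runs."""
    return [f.name for f in fields(RunInputs) if getattr(a, f.name) != getattr(b, f.name)]


# Illustrative values only.
baseline_inputs = RunInputs(
    endpoint_url="https://fhir.example.org/R4",
    profile_set="example-ig@1.4.0",
    terminology_state="tx-snapshot-2024-01",
    validator_version="6.3.0",
    run_config="default-thresholds",
    environment="staging",
    snapshot_time="2024-01-15T00:00:00Z",
)
upgraded = replace(baseline_inputs, validator_version="6.4.0")

print(strictly_comparable(baseline_inputs, upgraded))  # False
print(differing_inputs(baseline_inputs, upgraded))     # ['validator_version']
```

Listing the differing inputs is useful in practice: when two runs disagree, it separates "the data changed" from "the measuring instrument changed".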

When to Use Records

Five concrete scenarios where Records produces the evidence you need.

Release Safety Gate

Validate before pushing an IG/Profile update, FHIR server upgrade, or mapping change to production.

Drift & Regression Detection

After changes land, compare current validation state against your baseline to catch new errors immediately.
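
Comparing a run against a baseline amounts to a set difference over validation issues. The sketch below illustrates that idea under a simplifying assumption: an issue is identified by a hypothetical `(resource id, element path, message)` tuple, which is not necessarily how Records keys its findings.

```python
from dataclasses import dataclass

# Hypothetical issue identity: (resource id, element path, message).
Issue = tuple[str, str, str]


@dataclass(frozen=True)
class Delta:
    new: frozenset[Issue]        # present now, absent from the baseline
    resolved: frozenset[Issue]   # present in the baseline, gone now
    unchanged: frozenset[Issue]  # present in both runs


def diff_runs(baseline: set[Issue], current: set[Issue]) -> Delta:
    """Classify every issue relative to the baseline run."""
    return Delta(
        new=frozenset(current - baseline),
        resolved=frozenset(baseline - current),
        unchanged=frozenset(baseline & current),
    )


baseline = {
    ("Patient/1", "Patient.identifier", "missing system"),
    ("Observation/7", "Observation.code", "unknown code"),
}
current = {
    ("Observation/7", "Observation.code", "unknown code"),
    ("Patient/2", "Patient.birthDate", "invalid date"),
}

delta = diff_runs(baseline, current)
print(len(delta.new), len(delta.resolved), len(delta.unchanged))  # 1 1 1
```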

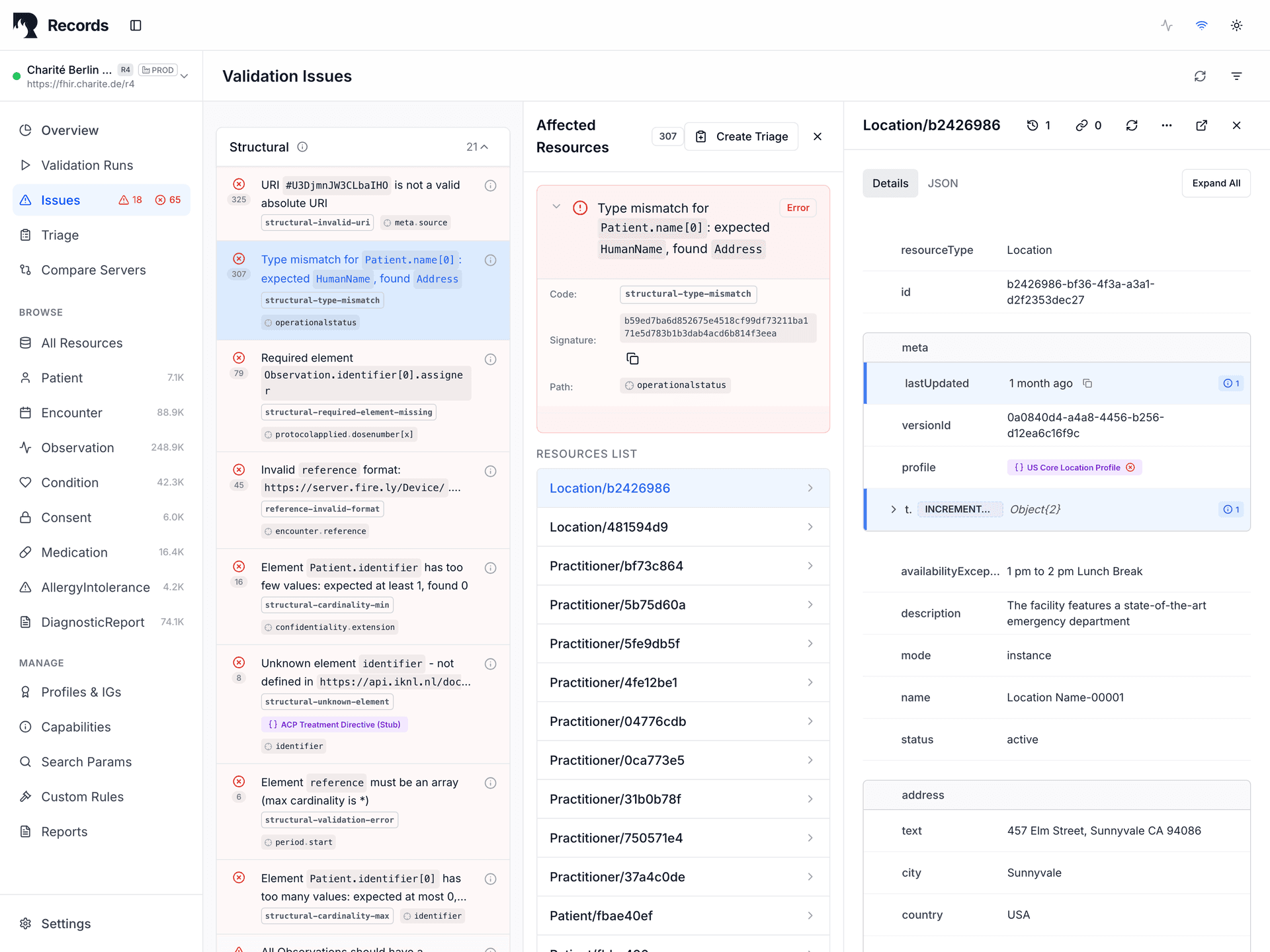

Deep-Dive Investigation

Instantly drill down from high-level metrics to specific JSON resources. See the exact line causing the error with contextual highlighting.

Acceptance & Handover Evidence

When a vendor delivers or a system migrates, produce auditable proof of validation state.

Multi-Server Comparability

Compare validation state across federated servers to identify alignment gaps before integration.

The Operating Model

Records operates continuously, not episodically. Validation runs on every change, not on a quarterly audit schedule.

The release-safety loop is the core operational cycle: baseline, run, delta, alert, triage, fix, re-baseline.

Each step in the loop produces traceable evidence. Baselines establish known-good states. Runs produce signals. Deltas quantify change. Alerts notify. Triage assigns ownership. Fixes resolve. Re-baselining closes the loop and starts the next cycle. The operator controls every transition.
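
The loop described above can be sketched as a small state machine in which nothing advances without operator approval. The `Step` names mirror the prose; the `advance` function and the choice to resume at `RUN` after re-baselining are assumptions made for illustration.

```python
from enum import Enum


class Step(Enum):
    BASELINE = "baseline"
    RUN = "run"
    DELTA = "delta"
    ALERT = "alert"
    TRIAGE = "triage"
    FIX = "fix"
    REBASELINE = "re-baseline"


_ORDER = list(Step)  # Enum members iterate in definition order


def next_step(current: Step) -> Step:
    """Return the step that follows `current` in the loop."""
    if current is Step.REBASELINE:
        # Assumption: re-baselining already sets the new known-good state,
        # so the next cycle starts directly with a run.
        return Step.RUN
    return _ORDER[_ORDER.index(current) + 1]


def advance(current: Step, operator_approved: bool) -> Step:
    """Move to the next step only if the operator approves the transition."""
    return next_step(current) if operator_approved else current


step = Step.BASELINE
for _ in range(3):
    step = advance(step, operator_approved=True)
print(step.value)  # alert
```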

Technical Surface

What goes in, what comes out, and why it matters.

Inputs

- FHIR Endpoint: One or more server URLs; Records reads via GET/HEAD only

- Profile Set + Version: StructureDefinitions that define your conformance target

- Terminology State: CodeSystem/ValueSet bindings from your terminology server

- Run Configuration: Thresholds, exclusions, environment label, baseline reference

Outputs

- Signals: Deterministic PASS / WARN / FAIL per validation run

- Deltas: New errors, resolved warnings, and regressions vs. baseline

- Evidence Metadata: Run ID, timestamp, config fingerprint, environment, profile version

- Conformance Score: Percentage-based quality indicator per resource type and environment
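
As an illustration of a percentage-based score per resource type, the sketch below computes the share of resources that validated cleanly. The exact formula Records uses is not specified here; treating the score as "valid resources divided by total resources" is an assumption, as is the `(resource_type, is_valid)` input shape.

```python
from collections import defaultdict
from typing import Iterable


def conformance_scores(results: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Percentage of valid resources per resource type, rounded to one decimal.

    `results` holds one (resource_type, is_valid) pair per validated resource.
    """
    totals: dict[str, int] = defaultdict(int)
    valid: dict[str, int] = defaultdict(int)
    for resource_type, is_valid in results:
        totals[resource_type] += 1
        if is_valid:
            valid[resource_type] += 1
    return {rt: round(100.0 * valid[rt] / totals[rt], 1) for rt in totals}


# Illustrative results for a single environment.
results = [
    ("Patient", True),
    ("Patient", True),
    ("Patient", False),
    ("Patient", True),
    ("Observation", True),
]
print(conformance_scores(results))  # {'Patient': 75.0, 'Observation': 100.0}
```

Running the same function once per environment gives scores that can be compared side by side before promoting a change.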

See it on your own data.

We'll connect Records to your FHIR server and run a validation — live, in 30 minutes. No slides, no sandbox.