Drift & Regression Detection

Your FHIR APIs passed certification. What happens in year two when ISiK stages advance, Da Vinci IGs update, terminology servers drift, and audits arrive?

For: MII data integration centers, payer FHIR program leads, EHR interop teams

The problem

Conformance is validated once. Then it drifts — silently.

ISiK stages advance. MII Kerndatensatz profiles evolve. gematik tightens constraints across TI-connected systems. In the US, CMS-9115-F Patient Access and CMS-0057-F Prior Authorization APIs must stay conformant over years of Da Vinci IG updates, USCDI version changes, and terminology expansions. But conformance is typically validated once — at go-live or certification — and never checked again.

Meanwhile, the systems around your FHIR APIs keep changing: a terminology server update changes 200 codings, a Da Vinci PAS profile tightens cardinality, an ISiK Basismodul profile adds a must-support element, a vendor pushes a mapping fix that introduces new reference patterns. Nobody notices for weeks — until an audit, a failed integration, or a CMS review surfaces the problem.

Drift is the gap between your validated baseline and the current reality. Without continuous comparison, you don't know what you don't know.

How Records solves it

Continuous delta comparison against your known-good baseline.

Conformance validated at go-live proves nothing about conformance six months later. Seven distinct drift vectors silently degrade data quality: integration changes, mapping evolution, terminology updates, IG version updates, server upgrades, client behavior shifts, and validator variability. Most take days to months to surface without continuous monitoring — by then the damage is in production.

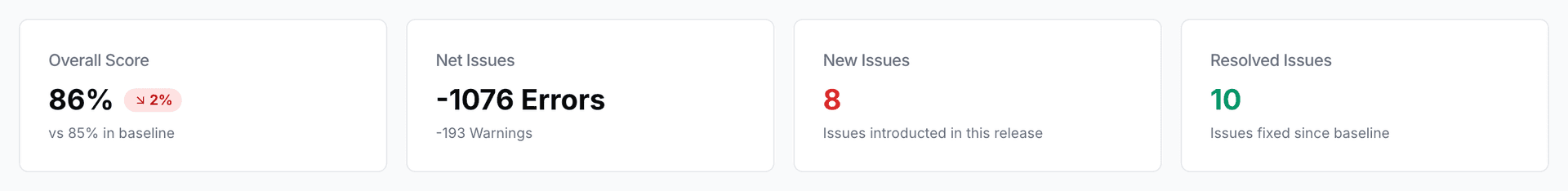

Records implements the core operational loop: Baseline → Continuous Runs → Delta/Drift Detection → Alert → Triage → Fix → Re-run → Re-baseline. Each scheduled run compares against the established baseline. When the delta exceeds configured thresholds, an alert fires. Every step in the loop produces traceable, reproducible evidence.

The overview dashboard tracks all validation runs. Per-run KPIs — resources validated, coverage, error and warning counts — show whether conformance is stable, improving, or degrading.

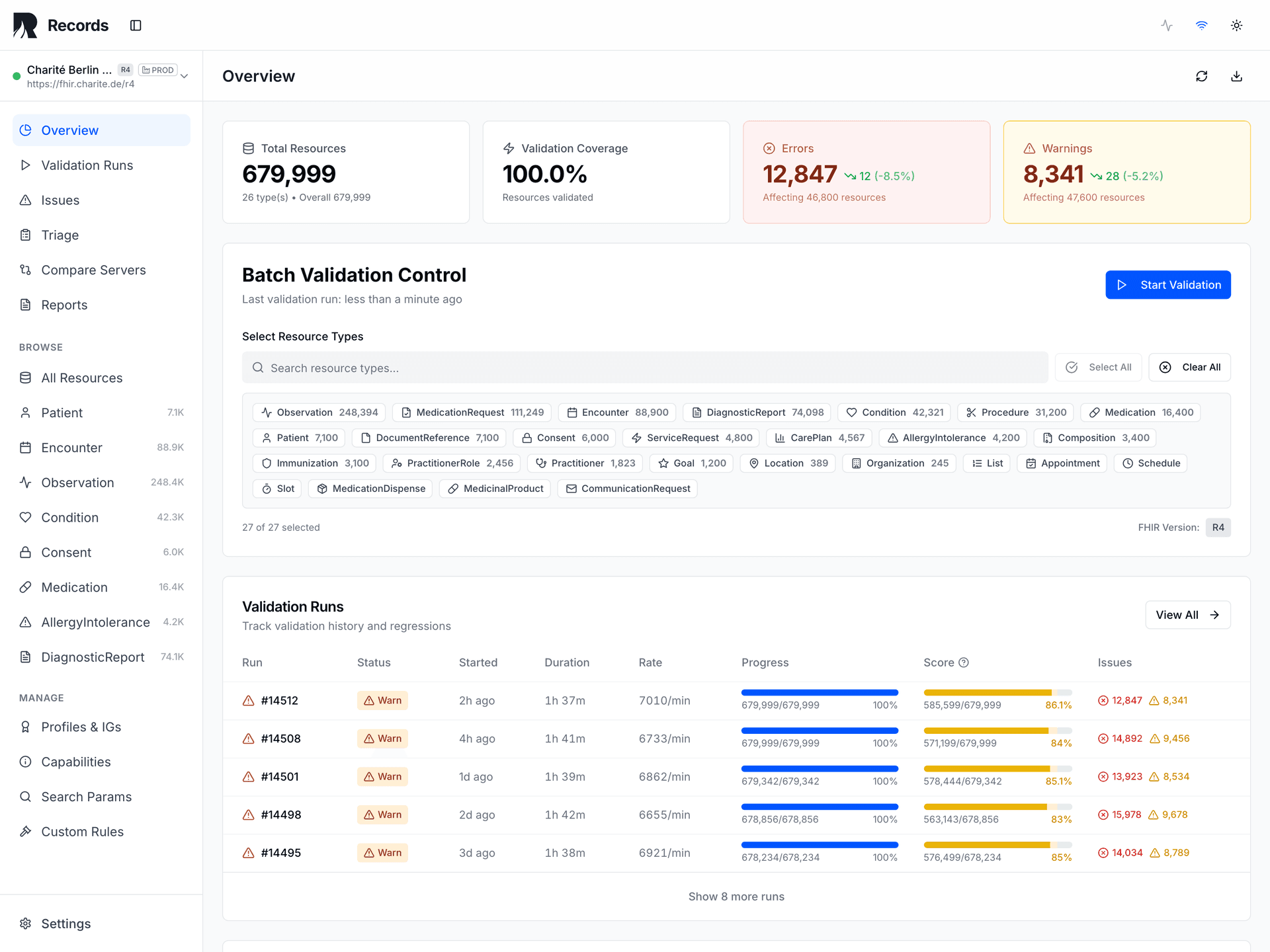

When drift exceeds thresholds, drill into specific issues and create triage actions — from signal to field-level root cause in seconds.

7 drift vectors

Integration changes, mapping evolution, terminology updates, IG version updates, server upgrades, client behavior shifts, validator variability — all detectable.

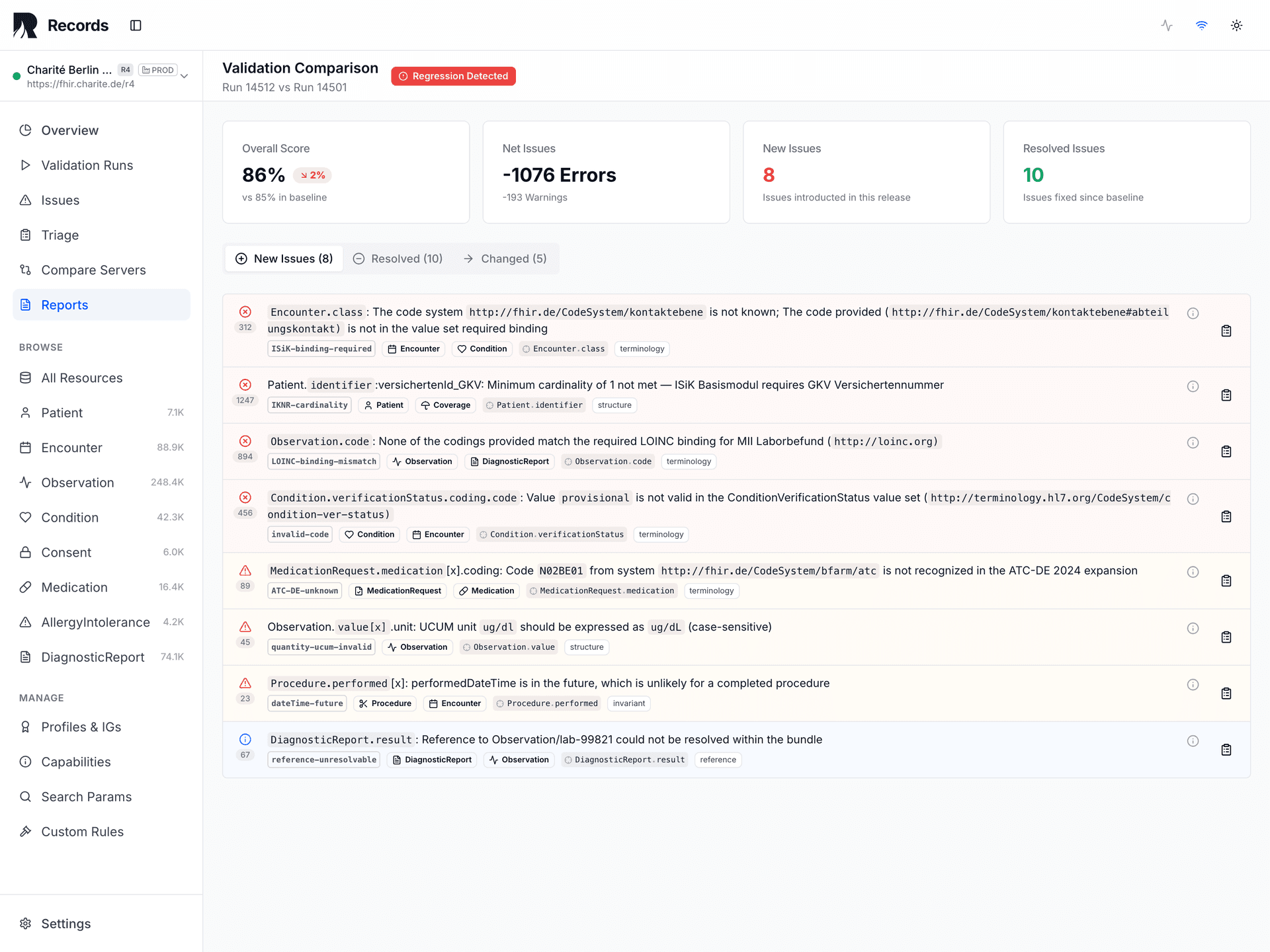

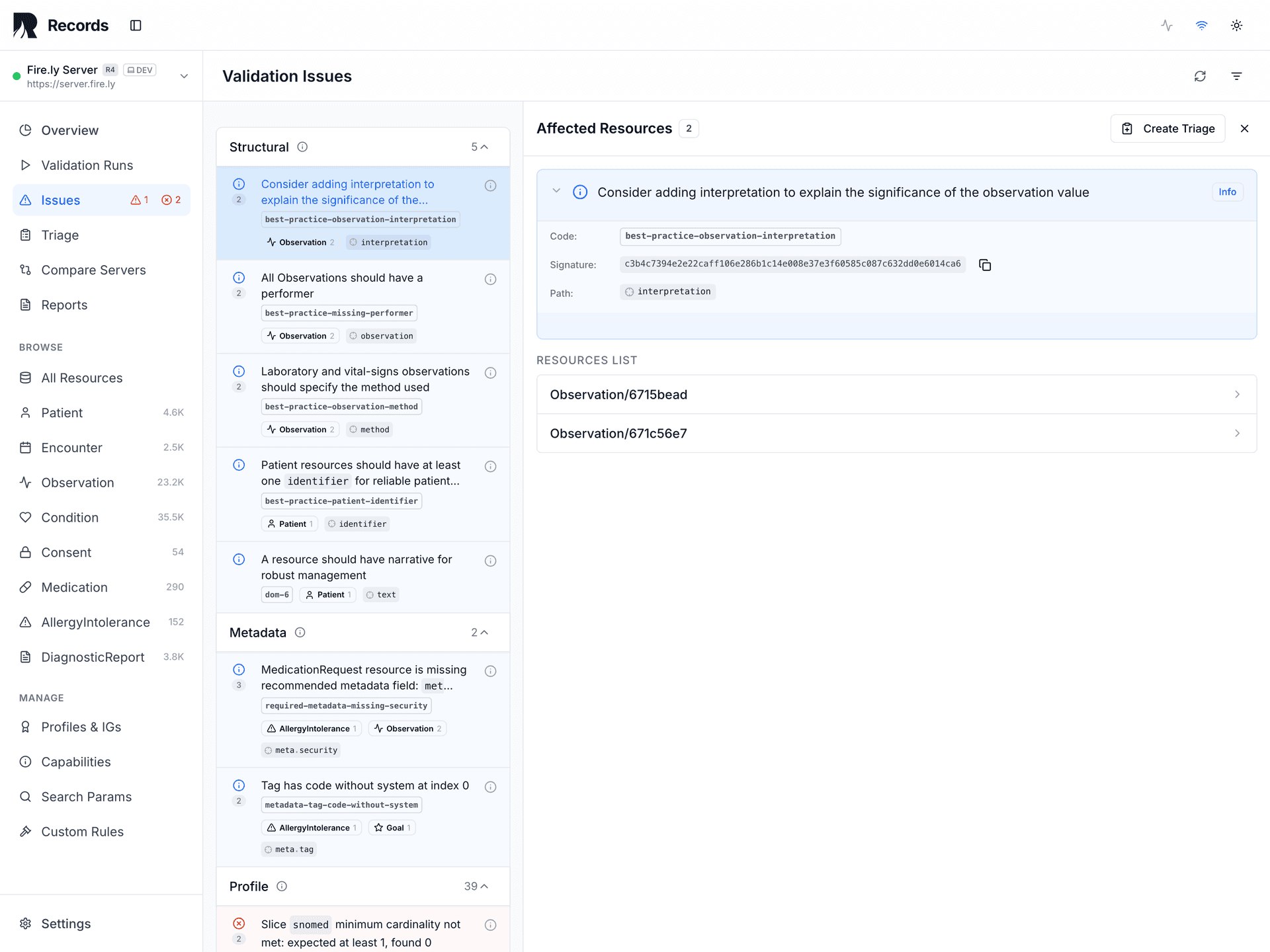

Delta reports

Every run produces a side-by-side diff against the baseline. New errors, resolved errors, and unchanged issues are classified separately.
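
To illustrate how that classification could work, here is a minimal Python sketch that treats each run as a set of issues and splits the delta with set operations. The `Issue` fields and the `classify_delta` function are hypothetical names chosen for this example; they are not the Records data model or API.

```python
from typing import NamedTuple


class Issue(NamedTuple):
    """Hypothetical issue key: one validation finding on one resource."""
    resource_id: str
    path: str
    code: str


def classify_delta(baseline: set[Issue], current: set[Issue]) -> dict[str, set[Issue]]:
    """Split the difference between two runs into three separate buckets."""
    return {
        "new": current - baseline,        # present now, absent in the baseline
        "resolved": baseline - current,   # present in the baseline, gone now
        "unchanged": baseline & current,  # present in both runs
    }


# Illustrative data only.
baseline_run = {
    Issue("Patient/1", "Patient.identifier", "required"),
    Issue("Claim/7", "Claim.item", "cardinality"),
}
current_run = {
    Issue("Claim/7", "Claim.item", "cardinality"),
    Issue("Observation/3", "Observation.code", "code-invalid"),
}

delta = classify_delta(baseline_run, current_run)
```

Keying issues on resource, path, and code is one possible choice; a coarser key (for example, profile plus code) would group findings differently.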

Threshold-based alerts

Configure error and warning thresholds per environment. Alerts fire when drift exceeds acceptable bounds.
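
A per-environment threshold check might look like the following Python sketch. The `Thresholds` class, the `THRESHOLDS` table, the limit values, and `should_alert` are all assumptions made for illustration; the actual Records configuration format is not shown in this page.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Hypothetical limits on how many new issues a run may introduce."""
    max_new_errors: int
    max_new_warnings: int


# Assumed example values: production is strict, staging is more tolerant.
THRESHOLDS = {
    "production": Thresholds(max_new_errors=0, max_new_warnings=10),
    "staging": Thresholds(max_new_errors=5, max_new_warnings=50),
}


def should_alert(environment: str, new_errors: int, new_warnings: int) -> bool:
    """Return True when either count exceeds the limit for this environment."""
    limits = THRESHOLDS[environment]
    return (
        new_errors > limits.max_new_errors
        or new_warnings > limits.max_new_warnings
    )
```

With these example limits, a single new error alerts in production but not in staging.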

Core loop

Baseline → run → compare → alert → triage → fix → re-run → re-baseline. Each step produces traceable evidence.

How to integrate

Run drift detection against your production FHIR API on a schedule.

Scheduled cron (e.g., nightly)

    records validate \
      --server=<production-fhir-api> \
      --ig=hl7.fhir.us.davinci-pas \
      --ig=hl7.fhir.us.carin-bb \
      --baseline=latest \
      --alert-on-delta

What gets pinned

| Pin | Purpose |
|---|---|
| validator_version | Ensures drift is in the data, not the engine |
| ig_packages | Profile versions pinned so IG updates are deliberate |
| terminology_snapshot | Detects when code system changes cause new errors |
| environment_label | Separates production monitoring from staging tests |
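
One way to picture the pins is as a small immutable record that travels with every run. The Python sketch below is an assumption-laden illustration: the field names come from the table above, but the `ReproducibilityContract` class, the helper functions, and all version strings are placeholders, not Records internals.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class ReproducibilityContract:
    """The four pins from the table, stored alongside each run."""
    validator_version: str
    ig_packages: tuple[str, ...]
    terminology_snapshot: str
    environment_label: str


def changed_pins(baseline: ReproducibilityContract,
                 current: ReproducibilityContract) -> list[str]:
    """List the pins whose values differ between two runs."""
    return [
        f.name
        for f in fields(ReproducibilityContract)
        if getattr(baseline, f.name) != getattr(current, f.name)
    ]


def attribute_delta(baseline: ReproducibilityContract,
                    current: ReproducibilityContract) -> str:
    """If no pin changed, a difference in results points at the data."""
    changed = changed_pins(baseline, current)
    if not changed:
        return "data drift"
    return "pin change: " + ", ".join(changed)


# Placeholder values for illustration only.
pinned = ReproducibilityContract(
    validator_version="1.0.0",
    ig_packages=("hl7.fhir.us.davinci-pas#1.0.0",),
    terminology_snapshot="2024-01-01",
    environment_label="production",
)
updated = replace(pinned, terminology_snapshot="2024-02-01")
```

Comparing `pinned` with itself yields "data drift" for any observed delta, while comparing it with `updated` names the terminology snapshot as the changed pin.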

For MII sites: schedule against your FHIR façade to track conformance across Kerndatensatz profiles. For payers: run as a cron job against your production Da Vinci / CARIN endpoints — delta reports serve as CMS audit evidence. For EHR vendors: add to staging CI so drift is caught before promotion to production.

What you get

Run comparison

Side-by-side diff of any two validation runs — see exactly what changed and when.

Drift alerts

Alerts for terminology updates, IG version changes, upstream payload changes, and validator variability.

Audit-ready evidence

Evidence Reports for CMS-0057-F, ISiK conformance audits, and regulatory responses, each with a full reproducibility contract.

Root-cause drill-down

From delta to specific resources and field-level errors — find root cause in seconds.

Core operational loop

Baseline, run, compare, alert, triage, fix, re-run, re-baseline — each step produces evidence.

Triage lifecycle

Every drifted issue tracked from Open through Acknowledged, In Progress, Verified, and Closed.
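
The lifecycle can be read as a simple state machine. The Python sketch below assumes a strictly linear, forward-only progression through the five states named above; whether Records allows skipping states or reopening closed issues is not stated here, so treat `advance` as a hypothetical helper.

```python
from enum import Enum


class TriageState(Enum):
    """The five triage states, in the order they are listed above."""
    OPEN = "Open"
    ACKNOWLEDGED = "Acknowledged"
    IN_PROGRESS = "In Progress"
    VERIFIED = "Verified"
    CLOSED = "Closed"


# Enum iteration follows definition order, which gives the lifecycle order.
LIFECYCLE = list(TriageState)


def advance(state: TriageState) -> TriageState:
    """Move an issue to the next state; Closed is terminal in this sketch."""
    index = LIFECYCLE.index(state)
    if index == len(LIFECYCLE) - 1:
        raise ValueError("issue is already Closed")
    return LIFECYCLE[index + 1]
```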

What Records detects that a FHIR validator cannot

$validate checks a single resource at a point in time. Records monitors datasets across time.

| Capability | FHIR Validator ($validate) | Records |
|---|---|---|
| Single-resource validation | Yes | Yes |
| Dataset-level validation | No | Full dataset against all configured profiles |
| Regression tracking | No | Full history with delta comparison |
| Anomaly detection | No | Statistical outlier identification across resources |
| Drift alerting | No | Threshold-based alerts on schedule |
| Evidence reports | No | Audit-grade PDFs with reproducibility contract |
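
The page does not say which statistic Records uses for anomaly detection. As a generic illustration of outlier identification across resources, the Python sketch below flags resources whose issue count is far from the median, using the modified z-score based on the median absolute deviation. The function name, the input shape, and the 3.5 cutoff are assumptions for this example.

```python
from statistics import median


def outlier_resources(issue_counts: dict[str, int], cutoff: float = 3.5) -> list[str]:
    """Flag resources whose issue count has a modified z-score above cutoff."""
    values = list(issue_counts.values())
    med = median(values)
    # Median absolute deviation: a spread measure that outliers barely move.
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no measurable spread, so nothing can be ranked as an outlier
    return [
        resource_id
        for resource_id, count in issue_counts.items()
        if 0.6745 * abs(count - med) / mad > cutoff
    ]


# Illustrative counts: one resource carries far more issues than the rest.
counts = {"Claim/1": 1, "Claim/2": 2, "Claim/3": 1, "Claim/4": 2, "Claim/5": 40}
flagged = outlier_resources(counts)
```

A median-based score is used here because a single extreme resource would inflate a mean and standard deviation and could hide itself.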

Frequently asked questions

What counts as drift?

Any change in validation results between runs without an intentional configuration change. This includes new errors from terminology server updates, changed constraint behavior from IG updates, upstream payload changes from feeding systems, and validator behavior changes from engine upgrades.

How often should drift detection run?

That depends on your risk tolerance. Payer FHIR APIs under CMS-0057-F obligations typically run nightly. Health-tech platforms run on every staging deployment. Records supports both cron-scheduled and event-triggered runs.

What are the seven drift vectors?

Integration changes, mapping evolution, terminology updates, IG version updates, server upgrades, client behavior shifts, and validator variability. Each has a different detection window: some are immediate (server upgrades), others are silent for weeks or months (terminology drift, dependency chain updates).

How does Records tell data drift apart from tooling changes?

By comparing against a known-good baseline with a pinned reproducibility contract. If the validator version, IG packages, and terminology snapshot are identical, any difference in results is attributable to data drift, not tooling changes.

Does Records store clinical or patient data?

No. Records stores resource IDs, validation outcomes, issue counts, and run metadata. Clinical content and patient data are never stored.

Talk to us about drift detection.

Share your context, question, or integration idea. We'll help you identify the right next step.