Regulatory & Audit Evidence

Produce reproducible, timestamped quality evidence for regulatory submissions and program-acceptance gates.

For: Clinical data managers, GxP/QA leads, regulators, compliance officers

The problem

Regulators require evidence. You have assertions.

A pharma sponsor submits a regulatory dossier to the EMA or FDA. The study used EHR-sourced FHIR data — but the data arrived non-conformant from multiple sites, with no quality gate at ingest. ICH E6(R3) and 21 CFR Part 11 require reproducible data-quality evidence. You have none.

A regulator or data-access intermediary — a BfArM DACO, an HIE, a QHIN — needs to accept a new data supplier. There is no objective, comparable quality signal across the servers they govern. Acceptance decisions are based on vendor self-attestation, not independent measurement.

In both cases, the problem is the same: no reproducible, timestamped evidence of FHIR data quality at the moment it matters — ingest, submission, acceptance.

How Records solves it

Reproducibility-contracted evidence for every submission and acceptance gate.

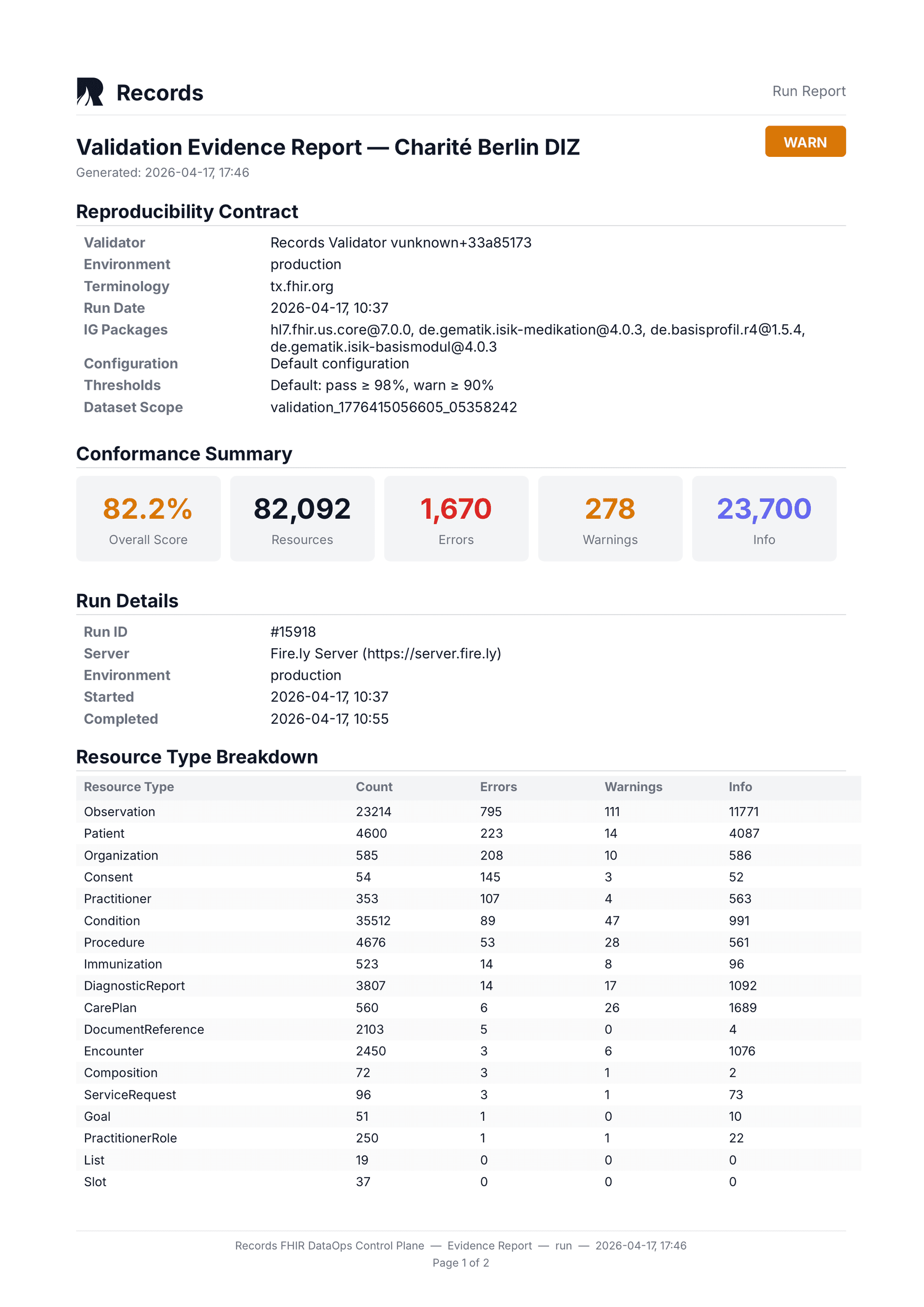

For regulatory submissions under ICH E6(R3) and 21 CFR Part 11, evidence must be reproducible. Records pins eight fields in every validation run: validator tool, validator version, validator config, IG packages, terminology source, terminology snapshot, environment label, and thresholds applied. Given the same inputs, the same results are produced — today, next month, or during an audit three years later. This is the reproducibility contract.

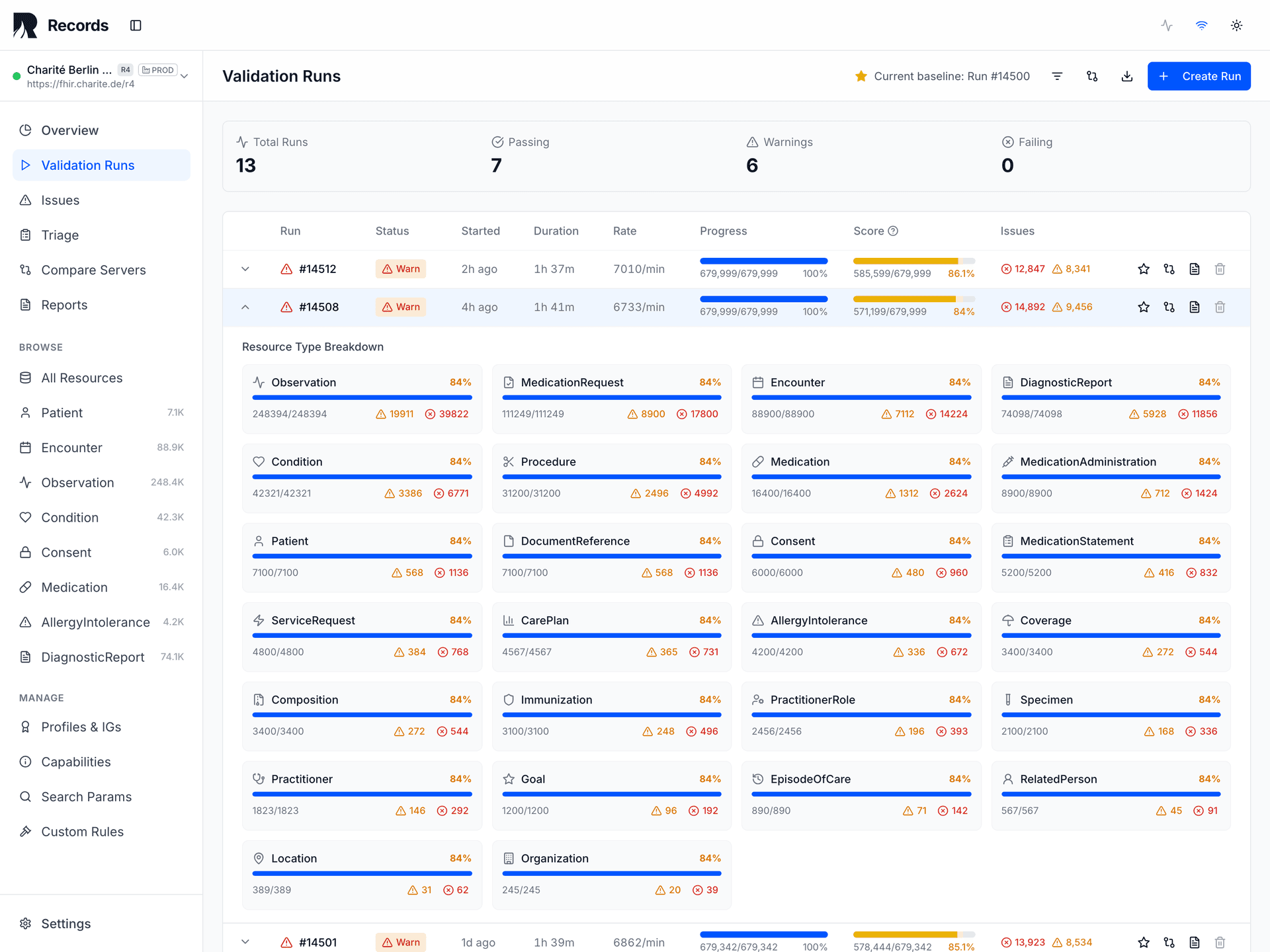

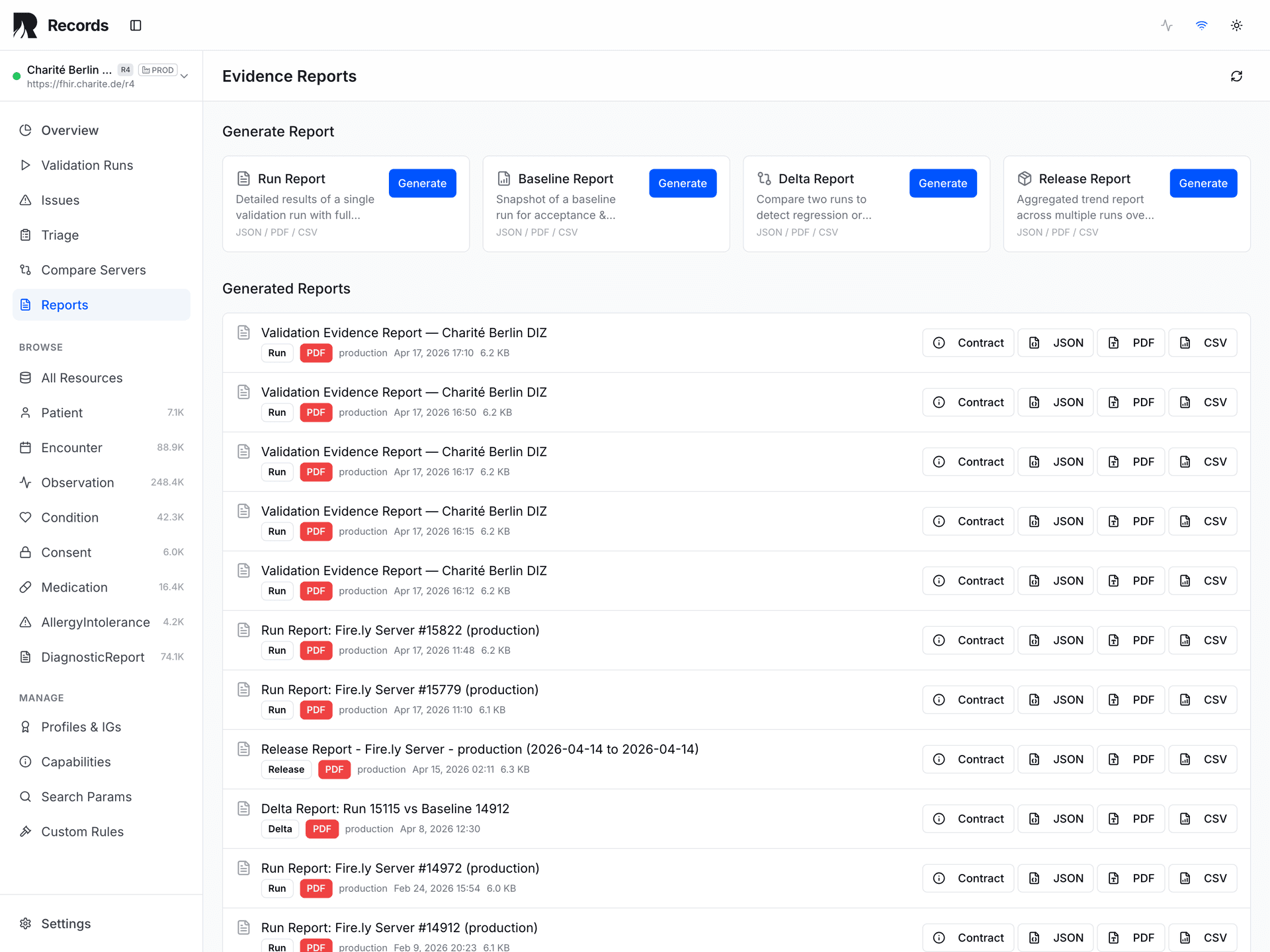

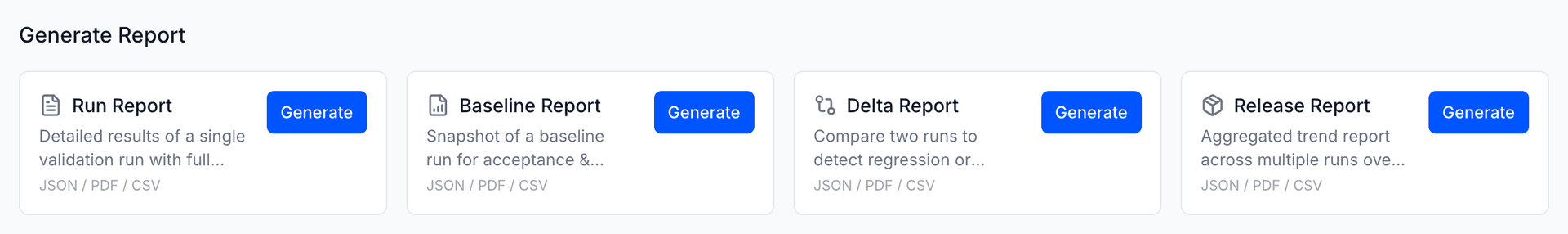

Records produces five evidence report types, each serving a distinct regulatory need. Run Reports capture a single validation snapshot. Baseline Reports establish a known-good reference state. Delta Reports compare two runs to surface regressions. Release Reports aggregate evidence for a deployment gate decision.

A fifth type — the Dataset Quality Report — aggregates cross-resource quality metrics including profile coverage and anomaly summaries for study-level or program-level governance.
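To make the Delta Report idea concrete, here is a minimal Python sketch of comparing two runs to surface regressions. The run representation (a mapping from rule identifier to issue count), the rule names, and the function names are assumptions for illustration; the actual Records report schema is not described on this page.

```python
from typing import Dict, List


def delta(baseline: Dict[str, int], current: Dict[str, int]) -> Dict[str, int]:
    """Return the per-rule change in issue count (current minus baseline).

    Hypothetical input shape: rule identifier -> number of issues found.
    A rule missing from one run is treated as having zero issues there.
    """
    rules = set(baseline) | set(current)
    changes = {r: current.get(r, 0) - baseline.get(r, 0) for r in rules}
    # Keep only the rules whose count actually changed.
    return {r: d for r, d in changes.items() if d != 0}


def regressions(baseline: Dict[str, int], current: Dict[str, int]) -> List[str]:
    """Rules whose issue count increased between the two runs, sorted by name."""
    return sorted(r for r, d in delta(baseline, current).items() if d > 0)


# Hypothetical example data, not taken from a real run.
baseline_run = {"patient-identifier-missing": 4, "observation-code-invalid": 10}
current_run = {
    "patient-identifier-missing": 4,
    "observation-code-invalid": 7,
    "encounter-period-missing": 2,
}
```

In this sketch, `regressions(baseline_run, current_run)` reports only the rule that got worse, while `delta` also shows the improvement on the other rule.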

The evidence PDF includes the full reproducibility contract: validator tool, version, config, IG packages, terminology source, snapshot, environment, and thresholds. Every report also carries a cryptographic content hash (SHA-256), so any later change to the report can be detected.
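An auditor could check a report's integrity along the following lines. This is a sketch that assumes the recorded hash is the SHA-256 of the report file's bytes; this page does not specify exactly which content Records hashes or where the hash is published, and the function names are illustrative.

```python
import hashlib


def sha256_of_file(path: str, chunk_size: int = 65536) -> str:
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_recorded_hash(path: str, recorded_hex: str) -> bool:
    """Return True if the file's digest equals the recorded hex digest.

    Assumption: the recorded value is the hash of the whole file.
    Whitespace and letter case in the recorded value are ignored.
    """
    return sha256_of_file(path) == recorded_hex.strip().lower()
```

If `matches_recorded_hash` returns False, the file differs from what was originally hashed, under the stated assumption about what the hash covers.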

Reproducibility contract

8 pinned fields guarantee identical inputs produce identical results. Replayable for audits years after the original run.

5 evidence report types

Run, Baseline, Delta, Release, and Dataset Quality reports — each serving a distinct regulatory or audit purpose.

SHA-256 integrity

Every evidence report carries a cryptographic content hash. Tamper detection is built into the output, not bolted on.

Cross-site comparability

Multi-center trials and multi-supplier programs get per-site quality signals on the same measurement scale.

How to integrate

Generate audit-grade evidence at study lock, site ingest, or supplier acceptance.

Pharma: validate at site ingest

    records validate \
      --server=<site-edc-fhir> \
      --ig=<study-profile> \
      --lock=validation.lock \
      --report=evidence-pdf

Regulator: validate supplier

    records validate \
      --server=<supplier-fhir-api> \
      --ig=<program-ig> \
      --report=evidence-pdf

The reproducibility contract

| Pin | Purpose |
|---|---|
| validator_tool | Identifies the validation engine |
| validator_version | Engine behavior determinism |
| validator_config | Strictness settings, enabled aspects |
| ig_packages | Profile set + version locked |
| terminology_source | Terminology server or local package |
| terminology_snapshot | Point-in-time code system state |
| environment_label | Site / center identification |
| thresholds_applied | Pass/fail criteria |
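As an illustration of how the eight pins could be handled in code, the sketch below models them as an immutable record and lists which pins differ between two runs. Only the field names come from the table above; the field types, the `fingerprint` helper, and the example values are assumptions, not the Records data model.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import List, Tuple


@dataclass(frozen=True)
class ReproducibilityContract:
    """The eight pinned fields; the types here are illustrative assumptions."""
    validator_tool: str
    validator_version: str
    validator_config: str
    ig_packages: Tuple[str, ...]
    terminology_source: str
    terminology_snapshot: str
    environment_label: str
    thresholds_applied: str

    def fingerprint(self) -> str:
        """SHA-256 over a canonical JSON encoding of the eight pins."""
        canonical = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def differing_pins(a: ReproducibilityContract, b: ReproducibilityContract) -> List[str]:
    """Names of the pins whose values differ, in field order."""
    da, db = asdict(a), asdict(b)
    return [name for name in da if da[name] != db[name]]


# Hypothetical values for illustration only.
site_a = ReproducibilityContract(
    validator_tool="example-validator",
    validator_version="1.0.0",
    validator_config="strict",
    ig_packages=("example.study.ig#1.0.0",),
    terminology_source="local-package",
    terminology_snapshot="2024-01-01",
    environment_label="site-a",
    thresholds_applied="default",
)
site_b = replace(site_a, environment_label="site-b")
```

Here `differing_pins(site_a, site_b)` returns only `environment_label`, which is what one would expect when the same measurement parameters are applied at two sites. An empty list would mean the two runs were pinned identically.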

For pharma sponsors: integrate at the EHR-to-EDC ingest pipeline. Use --lock=validation.lock for full lock-file pinning. Evidence PDFs attach directly to the submission dossier. For regulators: deploy on-premise via Docker for air-gapped data governance environments.
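For a multi-site ingest pipeline, the per-site invocations could be generated programmatically. The sketch below only builds argument lists from the flags shown in the commands above; the site names, URLs, and function names are hypothetical, and how the `records` CLI reports success or failure (for example through its exit code) is not documented on this page.

```python
from typing import Dict, List


def validate_command(server: str, ig: str, lock: str = "validation.lock") -> List[str]:
    """Build the argument list for one pinned validation run.

    Uses only the flags that appear in the examples above.
    """
    return [
        "records",
        "validate",
        f"--server={server}",
        f"--ig={ig}",
        f"--lock={lock}",
        "--report=evidence-pdf",
    ]


def commands_for_sites(sites: Dict[str, str], ig: str) -> Dict[str, List[str]]:
    """One command per site, all sharing the same IG and lock file."""
    return {name: validate_command(url, ig) for name, url in sites.items()}


# Hypothetical site registry; replace with real endpoints.
sites = {
    "site-a": "https://site-a.example/fhir",
    "site-b": "https://site-b.example/fhir",
}
commands = commands_for_sites(sites, ig="study-profile")
```

Each list can then be passed to `subprocess.run(cmd)` from the pipeline. Keeping the IG and lock file identical across sites is what keeps the resulting reports on the same measurement scale.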

What you get

Reproducibility contract

Same inputs produce same results — replayable for audits years later.

Evidence PDF

Pinned validator version, IG packages, terminology snapshot, and thresholds in every report.

Root-cause drill-down

From evidence summary to specific resources and field-level errors.

Cross-site comparability

Multi-center trials and multi-supplier programs on the same measurement scale.

Lock-file pinning

Every dependency version frozen via validation.lock for reproducibility across months and years.

5 evidence report types

Run, Baseline, Delta, Release, and Dataset Quality — each structured for its regulatory context.

Evidence vs. assertions

Vendor self-attestation is not reproducible evidence.

| | Self-attestation | Records evidence |
|---|---|---|
| Reproducibility | Not guaranteed | Guaranteed by 8-field contract |
| Integrity | | SHA-256 hash on every report |
| Comparability | Vendor-specific | Vendor-neutral, cross-site comparable |
| Auditability | Verbal or slide-based | Timestamped PDF with pinned configuration |
| Replayability | Cannot reproduce | Same inputs produce same results, years later |

Frequently asked questions

Records produces general-purpose validation evidence that is relevant to ICH E6(R3), 21 CFR Part 11, and program-acceptance gates. Records does not claim regulatory certification — it produces the evidence that supports your compliance arguments.

Eight fields that must be identical for a validation run to be considered strictly reproducible: validator tool, validator version, validator config, IG packages, terminology source, terminology snapshot, environment label, and thresholds applied. Records pins all eight and includes them in every evidence report.

Yes. Given the same lock file and the same FHIR data, Records produces identical results. The lock file freezes all dependency versions. This is the mechanism that makes evidence auditable years after the original run.

No. Records produces evidence signals — PASS, WARN, or FAIL. Governance stakeholders (clinical data managers, regulators, quality leads) make all acceptance and compliance decisions.

Yes. Records runs the same validation with the same reproducibility contract against each site's FHIR server. The per-site reports are directly comparable because the measurement parameters are identical.

Talk to us about regulatory evidence.

Share your context, question, or integration idea. We'll help you identify the right next step.